Teaching Physics to Neural Networks Removes ‘Chaos Blindness’

For Immediate Release

Researchers from North Carolina State University have discovered that teaching physics to neural networks enables those networks to better adapt to chaos within their environment. The work has implications for improved artificial intelligence (AI) applications ranging from medical diagnostics to automated drone piloting.

Neural networks are an advanced type of AI loosely based on the way that our brains work. Our natural neurons exchange electrical impulses according to the strengths of their connections. Artificial neural networks mimic this behavior by adjusting numerical weights and biases during training sessions to minimize the difference between their actual and desired outputs. For example, a neural network can be trained to identify photos of dogs by sifting through a large number of photos, making a guess about whether each photo is of a dog, seeing how far off that guess is and then adjusting its weights and biases until its guesses are closer to reality.
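The guess, measure, adjust loop described above can be sketched in a few lines of Python. This is an illustration only, not the researchers' code: the single-neuron model, the toy one-feature data and the names `sigmoid`, `predict` and `train` are all assumptions chosen for brevity, and the update rule is plain gradient descent on a cross-entropy loss.

```python
import math

def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def predict(w, b, x):
    """The network's guess: probability that input x belongs to class 1."""
    return sigmoid(w * x + b)

def train(samples, lr=0.5, epochs=2000):
    """Adjust one weight and one bias to shrink the gap between guess and label."""
    w, b = 0.0, 0.0
    for _ in range(epochs):
        for x, label in samples:
            error = predict(w, b, x) - label  # how far off the guess is
            w -= lr * error * x               # gradient step for the weight
            b -= lr * error                   # gradient step for the bias
    return w, b

# Hypothetical toy data: one feature per example, label 1 for "dog", 0 otherwise.
samples = [(0.0, 0), (1.0, 0), (2.0, 1), (3.0, 1)]
w, b = train(samples)
print(predict(w, b, 0.0), predict(w, b, 3.0))
```

A real image classifier has millions of weights rather than one, but each of them is nudged by the same kind of error-driven step.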

The drawback to this neural network training is something called “chaos blindness” – an inability to predict or respond to chaos in a system. Conventional AI is chaos blind. But researchers from NC State’s Nonlinear Artificial Intelligence Laboratory (NAIL) have found that incorporating a Hamiltonian function into neural networks better enables them to “see” chaos within a system and adapt accordingly.

Simply put, the Hamiltonian embodies the complete information about a dynamic physical system – the total amount of all the energies present, kinetic and potential. Picture a swinging pendulum, moving back and forth in space over time. Now look at a snapshot of that pendulum. The snapshot cannot tell you where that pendulum is in its arc or where it is going next. Conventional neural networks operate from a snapshot of the pendulum. Neural networks familiar with the Hamiltonian flow understand the entirety of the pendulum’s movement – where it is, where it will or could be, and the energies involved in its movement.
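The pendulum picture can be made concrete with a short Python sketch. It is not taken from the paper: the mass, length and gravity values, the leapfrog integrator and the function names are assumptions made for illustration. The point it shows is that once the Hamiltonian (kinetic plus potential energy) is known, a single state (angle and momentum) determines the rest of the motion, and the total energy stays nearly constant along the way.

```python
import math

M, L, G = 1.0, 1.0, 9.81  # assumed mass (kg), length (m), gravity (m/s^2)

def hamiltonian(theta, p):
    """Total energy of the pendulum: kinetic term plus potential term."""
    kinetic = p * p / (2.0 * M * L * L)
    potential = M * G * L * (1.0 - math.cos(theta))
    return kinetic + potential

def leapfrog_step(theta, p, dt):
    """Advance (theta, p) by dt using Hamilton's equations:
    dtheta/dt = dH/dp = p / (M L^2) and dp/dt = -dH/dtheta = -M G L sin(theta).
    """
    p -= 0.5 * dt * M * G * L * math.sin(theta)  # half step in momentum
    theta += dt * p / (M * L * L)                # full step in angle
    p -= 0.5 * dt * M * G * L * math.sin(theta)  # second half step in momentum
    return theta, p

def simulate(theta, p, dt=0.001, steps=10000):
    """Follow the pendulum for steps * dt seconds and return its final state."""
    for _ in range(steps):
        theta, p = leapfrog_step(theta, p, dt)
    return theta, p

theta0, p0 = 1.0, 0.0  # released from rest at 1 radian
theta1, p1 = simulate(theta0, p0)
print(hamiltonian(theta0, p0), hamiltonian(theta1, p1))
```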

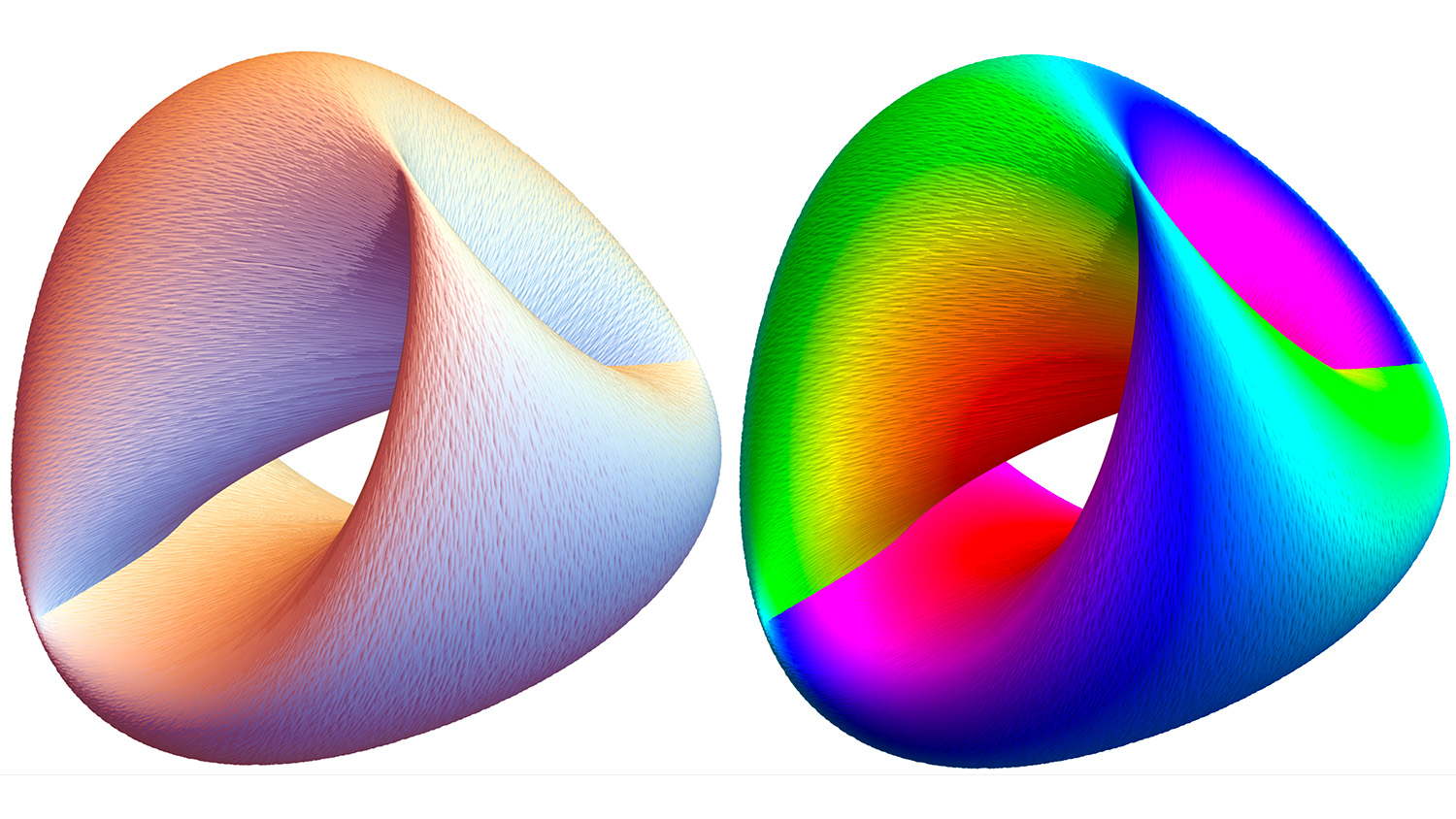

In a proof-of-concept project, the NAIL team incorporated Hamiltonian structure into neural networks, then applied them to a known model of stellar and molecular dynamics called the Hénon-Heiles model. The Hamiltonian neural network accurately predicted the dynamics of the system, even as it moved between order and chaos.
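For readers who want to see the benchmark itself, the sketch below writes out the standard Hénon-Heiles Hamiltonian in Python and derives the motion from it. It is a hedged illustration, not the NAIL team's implementation: here the energy function is the known formula, whereas a Hamiltonian neural network would learn that function from data, and derivatives are taken by finite differences rather than by the automatic differentiation a neural network library would typically provide. The function names, step sizes and starting state are assumptions.

```python
def henon_heiles_H(state):
    """Henon-Heiles energy for state = [x, y, px, py] (unit coupling)."""
    x, y, px, py = state
    return 0.5 * (px * px + py * py) + 0.5 * (x * x + y * y) + x * x * y - y ** 3 / 3.0

def hamiltonian_flow(H, state, eps=1e-6):
    """Time derivatives of the state from Hamilton's equations,
    using central finite differences of the scalar function H."""
    n = len(state) // 2
    grad = []
    for i in range(len(state)):
        up, down = list(state), list(state)
        up[i] += eps
        down[i] -= eps
        grad.append((H(up) - H(down)) / (2.0 * eps))
    dq = grad[n:]                # dq/dt =  dH/dp
    dp = [-g for g in grad[:n]]  # dp/dt = -dH/dq
    return dq + dp

def rk4_step(H, state, dt):
    """One fourth-order Runge-Kutta step along the Hamiltonian flow."""
    def shifted(s, k, h):
        return [si + h * ki for si, ki in zip(s, k)]
    k1 = hamiltonian_flow(H, state)
    k2 = hamiltonian_flow(H, shifted(state, k1, 0.5 * dt))
    k3 = hamiltonian_flow(H, shifted(state, k2, 0.5 * dt))
    k4 = hamiltonian_flow(H, shifted(state, k3, dt))
    return [s + dt * (a + 2.0 * b + 2.0 * c + d) / 6.0
            for s, a, b, c, d in zip(state, k1, k2, k3, k4)]

def forecast(H, state, dt=0.01, steps=1000):
    """Predict the state steps * dt time units ahead."""
    for _ in range(steps):
        state = rk4_step(H, state, dt)
    return state

start = [0.0, 0.1, 0.3, 0.0]  # assumed low-energy starting state
end = forecast(henon_heiles_H, start)
print(henon_heiles_H(start), henon_heiles_H(end))
```

Because every forecast step is derived from a single energy function, the predicted orbit keeps its energy almost exactly. A conventional network that predicts the next state directly has no such built-in constraint.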

“The Hamiltonian is really the ‘special sauce’ that gives neural networks the ability to learn order and chaos,” says John Lindner, visiting researcher at NAIL, professor of physics at The College of Wooster and corresponding author of a paper describing the work. “With the Hamiltonian, the neural network understands underlying dynamics in a way that a conventional network cannot. This is a first step toward physics-savvy neural networks that could help us solve hard problems.”

The work appears in Physical Review E and is supported in part by the Office of Naval Research (grant N00014-16-1-3066). NC State postdoctoral researcher Anshul Choudhary is first author. Bill Ditto, professor of physics at NC State, is director of NAIL. Visiting researcher Scott Miller; Sudeshna Sinha, from the Indian Institute of Science Education and Research Mohali; and NC State graduate student Elliott Holliday also contributed to the work.

-peake-

Note to editors: An abstract follows.

“Physics enhanced neural networks predict order and chaos”

DOI: 10.1103/PhysRevE.101.062207

Authors: Anshul Choudhary, Elliott G. Holliday, Scott T. Miller, William L. Ditto, North Carolina State University; John F. Lindner, NC State and The College of Wooster; Sudeshna Sinha, NC State and Indian Institute of Science Education and Research Mohali

Published: Online in Physical Review E

Abstract:

Artificial neural networks are universal function approximators. They can forecast dynamics, but they may need impractically many neurons to do so, especially if the dynamics is chaotic. We use neural networks that incorporate Hamiltonian dynamics to efficiently learn phase space orbits even as nonlinear systems transition from order to chaos. We demonstrate Hamiltonian neural networks on a widely used dynamics benchmark, the Hénon-Heiles potential, and on nonperturbative dynamical billiards. We introspect to elucidate the Hamiltonian neural network forecasting.
