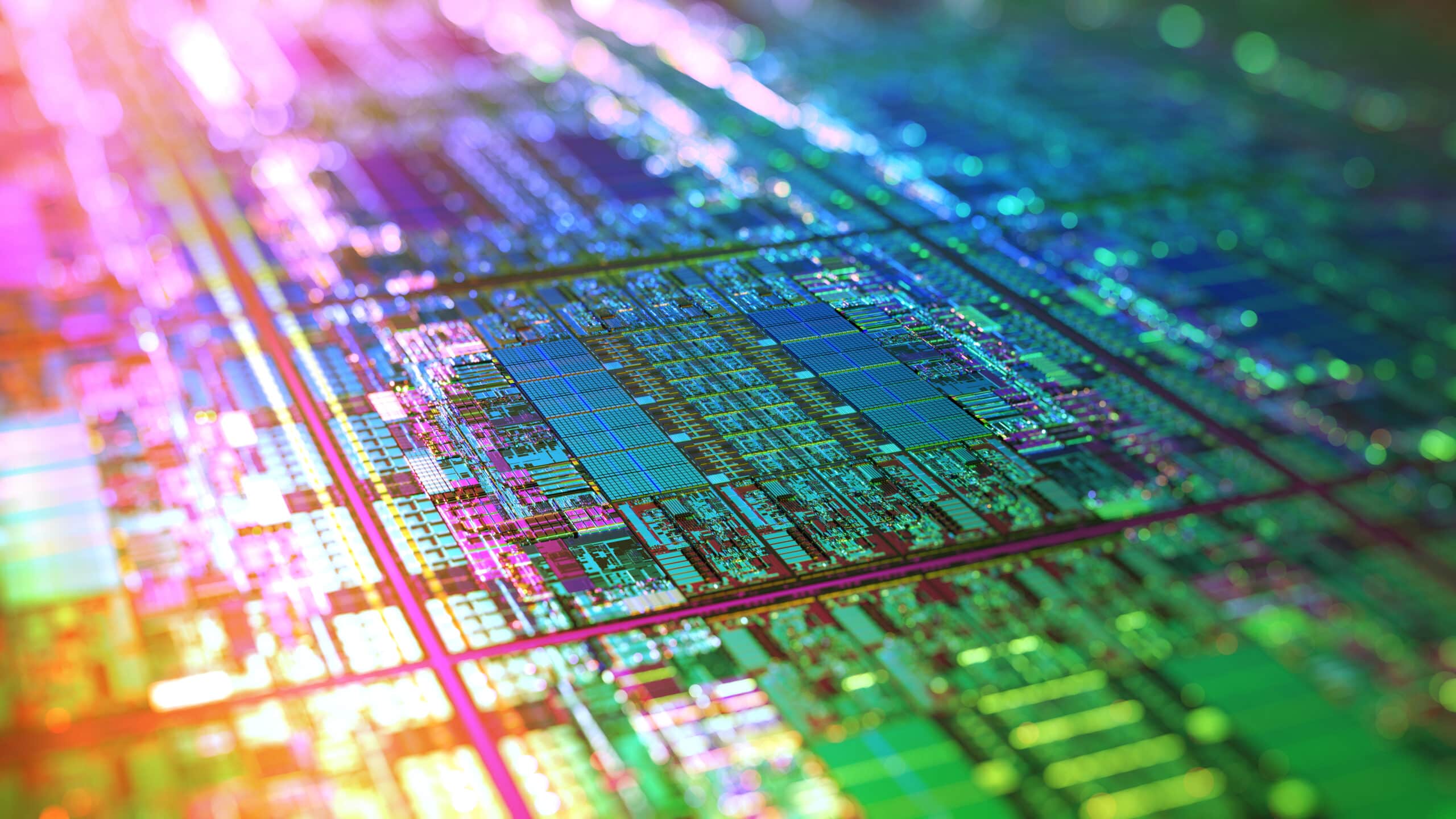

Engineers Boost Computer Processor Performance By Over 20 Percent

Researchers from North Carolina State University have developed a new technique that allows graphics processing units (GPUs) and central processing units (CPUs) on a single chip to collaborate – boosting processor performance by an average of more than 20 percent.

“Chip manufacturers are now creating processors that have a ‘fused architecture,’ meaning that they include CPUs and GPUs on a single chip,” says Dr. Huiyang Zhou, an associate professor of electrical and computer engineering who co-authored a paper on the research. “This approach decreases manufacturing costs and makes computers more energy efficient. However, the CPU cores and GPU cores still work almost exclusively on separate functions. They rarely collaborate to execute any given program, so they aren’t as efficient as they could be. That’s the issue we’re trying to resolve.”

GPUs were initially designed to execute graphics programs, and they are capable of executing many individual functions very quickly. CPUs, or the “brains” of a computer, have less computational power – but are better able to perform more complex tasks.

“Our approach is to allow the GPU cores to execute computational functions, and have CPU cores pre-fetch the data the GPUs will need from off-chip main memory,” Zhou says.

“This is more efficient because it allows CPUs and GPUs to do what they are good at. GPUs are good at performing computations. CPUs are good at making decisions and flexible data retrieval.”

In other words, CPUs and GPUs fetch data from off-chip main memory at approximately the same speed, but GPUs can execute the functions that use that data more quickly. So, if a CPU determines what data a GPU will need in advance, and fetches it from off-chip main memory, that allows the GPU to focus on executing the functions themselves – and the overall process takes less time.
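The reasoning above can be illustrated with a toy latency model. This is only a sketch under loud assumptions: the cycle counts are invented, the cache is treated as unlimited, the CPU is assumed to finish fetching before the GPU asks for each item, and the GPU's own ability to overlap memory waits with other work is ignored. It does not reproduce the researchers' measurements; it only shows why data that is already on-chip shortens the GPU's total time.

```python
# Toy latency model. All cycle counts are hypothetical illustration values,
# not figures from the research.
MISS_LATENCY = 400  # assumed cycles to fetch one item from off-chip main memory
HIT_LATENCY = 40    # assumed cycles to read one item from a shared on-chip cache
COMPUTE = 100       # assumed cycles of GPU computation per item


def gpu_time(addresses, cache):
    """Return total cycles for the GPU to fetch and then process each address."""
    total = 0
    for addr in addresses:
        # A hit is cheap; a miss pays the full off-chip latency.
        total += HIT_LATENCY if addr in cache else MISS_LATENCY
        cache.add(addr)
        total += COMPUTE
    return total


def cpu_prefetch(addresses):
    """Model the CPU touching the data first so it is already in the shared cache."""
    return set(addresses)


addresses = list(range(1000))
baseline = gpu_time(addresses, cache=set())                     # GPU fetches everything itself
assisted = gpu_time(addresses, cache=cpu_prefetch(addresses))   # CPU fetched ahead of time
speedup = baseline / assisted
```

With perfect prefetching, every GPU access in the assisted run is a cache hit, so `assisted` is smaller than `baseline`. Real hardware hides part of the memory latency on its own and has limited cache capacity, so actual gains are far smaller than this idealized model suggests.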

In preliminary testing, Zhou’s team found that its new approach improved fused processor performance by an average of 21.4 percent.

This approach has not been possible in the past, Zhou adds, because CPUs and GPUs were located on separate chips.

The paper, “CPU-Assisted GPGPU on Fused CPU-GPU Architectures,” will be presented Feb. 27 at the 18th International Symposium on High Performance Computer Architecture, in New Orleans. The paper was co-authored by NC State Ph.D. students Yi Yang and Ping Xiang, and by Mike Mantor of Advanced Micro Devices (AMD). The research was funded by the National Science Foundation and AMD.

-shipman-

Note to editors: The paper abstract follows.

“CPU-Assisted GPGPU on Fused CPU-GPU Architectures”

Authors: Yi Yang, Ping Xiang, Huiyang Zhou, North Carolina State University; Mike Mantor, Advanced Micro Devices

Presented: Feb. 27, 18th International Symposium on High Performance Computer Architecture, New Orleans

Abstract: This paper presents a novel approach to utilize the CPU resource to facilitate the execution of GPGPU programs on fused CPU-GPU architectures. In our model of fused architectures, the GPU and the CPU are integrated on the same die and share the on-chip L3 cache and off-chip memory, similar to the latest Intel Sandy Bridge and AMD accelerated processing unit (APU) platforms. In our proposed CPU-assisted GPGPU, after the CPU launches a GPU program, it executes a pre-execution program, which is generated automatically from the GPU kernel using our proposed compiler algorithms and contains memory access instructions of the GPU kernel for multiple threadblocks. The CPU pre-execution program runs ahead of GPU threads because (1) the CPU pre-execution thread only contains memory fetch instructions from GPU kernels and not floating-point computations, and (2) the CPU runs at higher frequencies and exploits higher degrees of instruction-level parallelism than GPU scalar cores. We also leverage the prefetcher at the L2-cache on the CPU side to increase the memory traffic from CPU. As a result, the memory accesses of GPU threads hit in the L3 cache and their latency can be drastically reduced. Since our pre-execution is directly controlled by user-level applications, it enjoys both high accuracy and flexibility. Our experiments on a set of benchmarks show that our proposed pre-execution improves the performance by up to 113% and by 21.4% on average.
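The idea of a pre-execution program that keeps only the kernel's memory accesses can be sketched in a few lines. This is a hypothetical illustration, not the authors' compiler algorithm: the `Load` and `Compute` instruction types, `make_pre_execution`, and `run_pre_execution` are invented names, the kernel is a simple vector addition chosen for the example, and only loads are modeled.

```python
from typing import Callable, List, NamedTuple, Tuple, Union


class Load(NamedTuple):
    """Hypothetical memory-read instruction: array name plus index as a function of thread id."""
    array: str
    index: Callable[[int], int]


class Compute(NamedTuple):
    """Hypothetical arithmetic instruction; carries no memory access."""
    op: str


Instruction = Union[Load, Compute]


def make_pre_execution(kernel: List[Instruction]) -> List[Load]:
    """Keep the memory fetches and drop the computation."""
    return [ins for ins in kernel if isinstance(ins, Load)]


def run_pre_execution(pre: List[Load], first_block: int, num_blocks: int,
                      block_size: int) -> List[Tuple[str, int]]:
    """Replay the loads for every thread of several threadblocks, as one CPU thread would."""
    touched = []
    for block in range(first_block, first_block + num_blocks):
        for t in range(block_size):
            tid = block * block_size + t  # global thread id
            for load in pre:
                touched.append((load.array, load.index(tid)))
    return touched


# Assumed example kernel: c[i] = a[i] + b[i]
kernel = [Load("a", lambda tid: tid), Load("b", lambda tid: tid), Compute("fadd")]
pre = make_pre_execution(kernel)
touched = run_pre_execution(pre, first_block=0, num_blocks=2, block_size=4)
```

Here `touched` lists the array elements a CPU thread would read on behalf of two threadblocks of four threads each. On real hardware those reads would pull the data into the shared cache before the GPU threads request it; this sketch only records which elements would be touched.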
